Call or WhatsApp us anytime

Mail Us For Support

The rules of digital visibility have fundamentally changed. When someone asks ChatGPT, Perplexity, or Google’s AI Overview a question about your industry, is your brand part of the answer? If not, you’re invisible to a rapidly growing segment of potential customers who never see traditional search results.

Large Language Models now process over 3 billion prompts monthly through ChatGPT alone. Google’s AI Overviews appear in approximately 16 percent of all queries. Perplexity recorded 153 million website visits in May 2025, representing growth exceeding 190 percent year-over-year. These platforms don’t display ten blue links; they synthesize one authoritative answer. The content sources they cite become the default authorities in their respective domains.

Getting cited by LLMs differs fundamentally from traditional search engine optimization. Research analyzing over 18,000 queries found that only 12 percent of URLs cited by large language models appear in Google’s top ten results. Nearly 90 percent of ChatGPT citations originate from positions beyond rank 21 in traditional search rankings. This disconnect reveals a critical insight: ranking high on Google no longer guarantees visibility in AI-generated answers.

This guide presents ten evidence-based strategies for earning LLM citations, drawn from academic research, industry studies analyzing 680 million citations, and documented patterns across ChatGPT, Perplexity, Google AI Overviews, and Gemini. These methods work because they align with how large language models retrieve, evaluate, and attribute information.

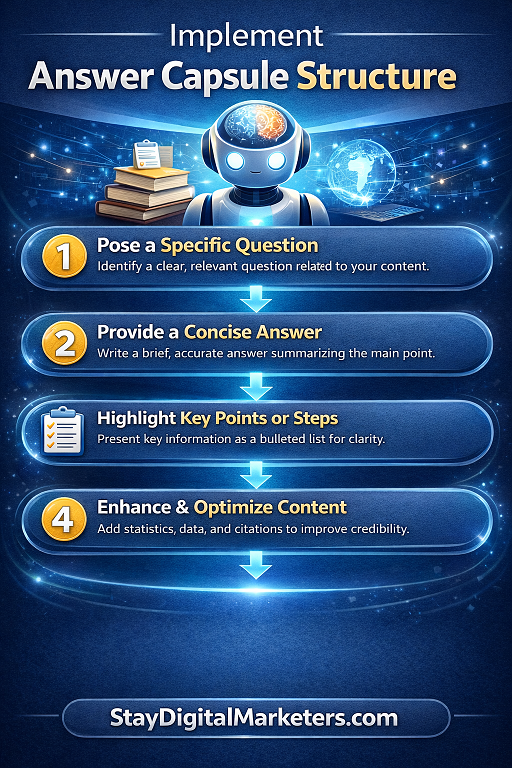

Strategy 1: Implement Answer Capsule Structure

Answer capsules represent the single most predictive factor for LLM citation. An answer capsule is a self-contained explanation of approximately 20 to 25 words placed directly after a question-based heading. This format provides large language models with cleanly extractable information they can confidently cite.

Analysis of blog content receiving ChatGPT referrals revealed that answer capsule presence was the most consistent predictor of citation. More than 86 percent of cited posts contained answer capsules, while only 13 percent of cited content lacked both capsules and proprietary insights. The pattern held across industries and content types.

The structure works because it reduces the cognitive effort required for extraction. When an LLM scans your content, it searches for passages that directly answer specific questions. Answer capsules provide exactly that—a concentrated, quotable response positioned where models expect to find it.

How to Create Effective Answer Capsules

Structure your content with question-based H2 headings that mirror natural language queries. Immediately below each heading, place a concise answer of 40 to 60 words that could stand alone as a complete response. This answer should be link-free, as research shows more than 90 percent of cited capsules contain no internal or external links. Place supporting links below the capsule or in subsequent paragraphs.

The capsule should deliver high-confidence information using clear, declarative language. Avoid hedging phrases like “it depends” or “there are many factors” in your opening answer. Lead with the core response, then expand with context, examples, and qualifications in the paragraphs that follow.

For maximum effectiveness, embed one unique data point or proprietary insight within or immediately after the capsule. Even a small owned insight dramatically boosts citation potential by transforming generic answers into authority assets that models prioritize for attribution.

Strategy 2: Produce Original Research and First-Party Data

Original research earns citations at dramatically higher rates than aggregated content. According to analysis of ChatGPT’s top 1,000 citations, 67 percent reference original research, first-hand data, or academic sources—content types most marketing teams don’t produce. This represents the single largest opportunity gap in content strategy.

Large language models prioritize primary sources because they establish authority and provide verifiable information other creators reference. When you publish original surveys, proprietary datasets, or unique industry benchmarks, you become the source of truth in your domain. Other websites cite your data, creating a citation network that signals credibility to retrieval systems.

The impact extends beyond direct citations. Original research attracts organic backlinks from journalists, bloggers, and industry publications seeking credible data for their content. These third-party mentions compound your authority footprint, making your brand increasingly likely to appear in AI-generated answers across related topics.

Creating Citation-Worthy Research

Original research doesn’t require massive budgets or academic rigor. Customer surveys with 100 to 200 responses generate valuable benchmarks. Usage data from your product creates unique insights your competitors cannot replicate. Annual industry reports compiling trends and statistics establish topical authority.

Present your findings in formats that maximize extractability. Lead with an executive summary containing your headline statistics. Use comparison tables showing year-over-year changes or competitive benchmarks. Create standalone charts and graphs with descriptive captions that explain what the data reveals.

Name your research frameworks and methodologies to create citation-worthy entities. The terminology you establish becomes the language others use when discussing your concepts, naturally leading to brand mentions in subsequent AI-generated content.

Strategy 3: Structure Content as Listicles and Comparison Tables

Content formatting significantly impacts citation likelihood. Listicles account for 50 percent of top AI citations, while tables and structured data formats receive citations at rates 2.5 times higher than unstructured prose. These formats work because they create clear extraction boundaries and explicit data relationships that reduce interpretation work for large language models.

When LLMs scan content, they perform pattern matching to identify relevant information segments. Numbered lists signal discrete, complete units of information. Tables provide explicit relationships between entities, features, and attributes. Both formats enable models to extract specific elements with high confidence about context and meaning.

The preference for structured formats reflects how language models construct responses. Rather than reproducing entire pages, they stitch together relevant segments from multiple sources. Content pre-organized into cleanly delineated sections makes this assembly process more reliable, increasing the probability your specific points get selected and attributed.

Implementing High-Performance Formats

Transform existing long-form content into scannable structures. Convert paragraph-based explanations into bullet lists highlighting key points. Replace prose comparisons with comparison tables showing features, pricing, or specifications side by side. Use numbered steps for processes and sequential instructions.

For product comparisons and buying guides, create detailed tables comparing alternatives across relevant decision criteria. These comparison assets consistently earn citations because they concentrate valuable information in formats that match how users formulate queries and how models construct comprehensive answers.

Maintain depth within structured formats. Each list item should contain 50 to 100 words of explanation, not just single sentences. Structured doesn’t mean superficial—it means organizing substantial information in ways that facilitate extraction and attribution.

Strategy 4: Lead with Direct Answer-First Formatting

Answer-first formatting places your response in the first 40 to 60 words of each content section, before any introductory context or background information. This inverted pyramid approach enables AI systems to extract answers directly without parsing through setup and preamble.

Evidence demonstrates the impact of this structural choice. After optimizing content for answer-first formatting using FAQ Schema, documented results showed Featured Snippet rates increasing from 8 percent to 24 percent over five months—a threefold improvement. ChatGPT citations for the same content increased 140 percent, from 5 to 12 citations across 100 test queries.

The technique works because it mirrors the structure of training data that large language models encounter most frequently. Encyclopedia entries, academic abstracts, and news articles all lead with conclusions before providing supporting details. Content structured this way feels familiar to retrieval systems, making extraction and attribution more straightforward.

Applying Answer-First Architecture

Review your content at the section level. Identify the core answer each section provides, then restructure to place that answer in the opening paragraph immediately after the heading. Move background information, historical context, and qualifying details to subsequent paragraphs.

Your opening answer should function as a standalone TL;DR that fully resolves the section’s central question. A reader should be able to scan only the headings and first paragraphs of your content and understand your complete argument. This scanability benefits both human readers and machine readers.

Pair answer-first structure with semantic cues that help models identify your main points. Use phrases like “in summary,” “the key takeaway,” “most importantly,” and “the direct answer” to signal where your concentrated insights appear. These verbal markers function as extraction hints for retrieval systems.

Strategy 5: Optimize for Content Freshness and Regular Updates

Recency signals carry significantly more weight in AI citation than traditional SEO. Research found that 76.4 percent of ChatGPT’s most-cited pages were updated in the last 30 days. Analysis of AI Overview citations revealed that 85 percent come from content published in the last two years, with 44 percent from 2025 alone. URLs cited in AI results average 25.7 percent fresher than sources in traditional search results.

Large language models prioritize current information because accuracy matters more than authority when answering time-sensitive questions. An outdated article from an authoritative domain loses to a recently updated page from a moderate-authority source when the query involves current state, recent developments, or evolving best practices.

This creates both challenge and opportunity. Content that performed well for years in traditional search can suddenly disappear from AI citations if its publish date appears stale. Conversely, regularly updated content from newer sites can earn citations despite lacking the backlink profiles and domain authority traditional SEO demands.

Implementing Effective Content Refresh Strategies

Establish a quarterly review cycle for your highest-traffic content. Update statistics with current data, replace outdated examples with recent scenarios, and add sections covering new developments since the original publication. Change the publish date to reflect the update, and ensure this date appears in your structured data markup.

Add temporal markers throughout your content. Replace phrases like “recently” or “in recent years” with specific dates and timeframes. Include publication and last-updated dates prominently at the top of articles. These explicit temporal signals help models assess whether your information remains current for a given query.

Create annual or quarterly reports that supersede previous versions. Rather than updating the same URL indefinitely, publish fresh reports with new URLs that explicitly indicate their time period. This establishes a publishing pattern that signals ongoing expertise and commitment to current information.

Strategy 6: Implement Comprehensive Schema Markup

Structured data provides explicit entity relationships and content attributes that AI systems can extract with high confidence. Products with comprehensive schema markup appear in AI recommendations three to five times more frequently than those without. LLMs grounded in knowledge graphs achieve 300 percent higher accuracy compared to models relying only on unstructured data.

Schema markup doesn’t guarantee citation, but it reduces ambiguity about your content’s meaning and structure. Without schema, language models must interpret your content contextually. With properly implemented structured data, you provide machine-readable descriptions of exactly what your page contains, who created it, and how different entities on the page relate to each other.

The value extends beyond direct content understanding. Schema creates connections between your content and broader knowledge graphs that language models reference during retrieval. When your entities align with established knowledge base entries, models can verify your information more easily, increasing citation confidence.

Implementing Citation-Friendly Schema

Prioritize schema types that establish content and entity relationships. Implement Article schema with author, publisher, publish date, and modified date properties. Use Organization schema to define your brand identity with logo and sameAs profiles linking to authoritative sources about your company. Add Person schema for authors with credentials and roles.

For content addressing specific question types, implement specialized schema. Use FAQ schema for question-answer pairs, HowTo schema for step-by-step processes, and Product schema for items you review or recommend. Each specialized type provides additional context clues that improve retrieval relevance.

Use JSON-LD format for implementation, as this is Google’s preferred structured data method and the format most reliably parsed by various AI systems. Validate your schema implementation using Google’s Rich Results Test and Schema.org validators to ensure proper syntax and completeness.

Strategy 7: Build Topical Authority Through Content Clusters

Topical clustering establishes domain expertise by creating a comprehensive knowledge hub around specific subjects. This approach involves publishing one authoritative pillar page covering a topic broadly, supported by multiple cluster pages addressing narrower subtopics in depth, all connected through strategic internal linking using consistent anchor text.

Content clusters outperform isolated articles for AI citation because they establish canonical pages that retrieval systems can select with confidence. When models encounter multiple pages from the same domain addressing related aspects of a query, they interpret this as evidence of focused expertise rather than keyword-chasing opportunism.

Research indicates that 82.5 percent of AI citations link to nested pages rather than homepages. Deep content that addresses specific subtopics earns citations more reliably than homepage-level overview content. Clusters create the navigational structure and internal link context that helps models identify your most relevant deep pages for specific queries.

Architecting Effective Topic Clusters

Begin by identifying your core topics—the three to five areas where you genuinely possess deep expertise and serve customer needs. For each core topic, create a comprehensive pillar page covering fundamental concepts, key questions, and overview information.

From each pillar, develop five to ten cluster pages addressing specific subtopics, use cases, or questions in detail. Link from the pillar to each cluster page using descriptive anchor text containing the target query phrase. Link back from cluster pages to the pillar, and create lateral links between related cluster pages.

Maintain entity consistency across your cluster by using identical terminology for key concepts throughout all related pages. This semantic coherence helps language models understand topic relationships and recognize your collective content as a unified knowledge resource, rather than a collection of disconnected articles.

Strategy 8: Earn Third-Party Mentions and Citations

External mentions significantly amplify citation likelihood. Analysis shows AI Overviews cite content through external sources at rates 6.5 times higher than through direct discovery alone. When authoritative publications, industry blogs, or community discussions mention your brand and link to your content, this creates multiple pathways for LLM discovery and validation.

The relationship between external mentions and AI citations differs from traditional backlink equity. Rather than passing PageRank-style authority, third-party citations establish that multiple independent sources consider your content reference-worthy. This pattern recognition signals quality to retrieval systems more powerfully than single-site authority signals.

Reddit demonstrates this dynamic clearly, accounting for 40.1 percent of LLM citations according to research, far exceeding its representation in traditional search results. When real users organically discuss and link to your content in community contexts, language models interpret this as a strong signal of practical utility and trustworthiness.

Strategies for Earning External Citations

Focus on creating assets that other content creators want to reference. Original research, industry benchmarks, comprehensive guides, and unique frameworks naturally attract citations when they provide information unavailable elsewhere. Make these assets easy to cite by creating clean URLs, providing embed codes for graphics, and summarizing key findings in shareable formats.

Engage authentically in relevant online communities where your audience asks questions. Contribute genuinely helpful answers on Reddit, Quora, and niche forums, linking to your detailed content when it provides comprehensive information. This builds organic mention patterns that language models recognize as valuable community knowledge.

Develop relationships with journalists and industry publications by positioning yourself as a reliable expert source. Original research with newsworthy findings attracts media coverage, creating high-authority citations that cascade into improved AI visibility. Guest contributions to respected publications build your citation network while establishing credentials.

Strategy 9: Use FAQ Formatting and Question-Answer Structure

FAQ formatting creates perfect extraction targets for language models. Question-answer structure maps directly to how users formulate prompts and how AI systems construct responses. Content organized as explicit questions with direct answers performs exceptionally well across all major AI platforms.

Well-optimized FAQ pages rank 47 percent higher in AI-generated search responses compared to narrative content covering the same information. The format works because it eliminates structural ambiguity—the question clearly defines scope, and the answer provides the targeted information without requiring contextual interpretation.

FAQ structure also captures long-tail conversational queries effectively. When users ask AI assistants complex or specific questions, models search for content addressing those exact formulations. FAQ sections covering diverse related questions create multiple matching opportunities that topical articles with single focus areas cannot achieve.

Creating Citation-Ready FAQ Content

Develop comprehensive FAQ sections addressing the full spectrum of questions your audience asks about your topic. Use actual customer questions from support tickets, sales calls, and social media as the foundation. These authentic questions reflect real language patterns that users employ when querying AI systems.

Format each FAQ item with the question as a subheading and the answer as the immediately following paragraph. Keep answers focused and complete within 75 to 150 words. For complex questions requiring longer explanations, provide a direct answer first, then expand with additional context.

Implement FAQ schema markup for these sections to provide an explicit machine-readable structure. The schema makes extraction unambiguous by labeling which text constitutes the question and which text provides the answer, improving both citation accuracy and attribution likelihood.

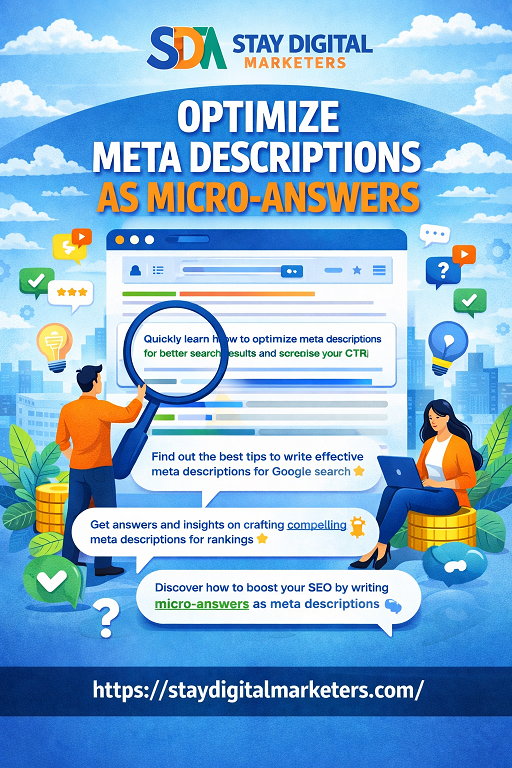

Strategy 10: Optimize Meta Descriptions as Micro-Answers

Meta descriptions function differently in AI-driven search than in traditional SEO. Rather than serving primarily as SERP snippet preview text, they operate as high-signal, machine-readable content summaries that help language models quickly assess page relevance and determine citation appropriateness for specific queries.

Effective meta descriptions for LLM optimization read like micro-answers rather than marketing taglines. They should concisely state what specific question or topic the page addresses and preview the unique value or perspective provided. Vague promotional language reduces citation potential by obscuring actual content utility.

The ideal AI-optimized meta description follows a formula: core topic identification, key information preview, and unique value indicator. It should function as a standalone capsule that helps both humans and machines immediately understand whether the full page addresses their specific information need.

Writing Citation-Optimized Meta Descriptions

Begin meta descriptions by clearly stating the core entity and user intent your page addresses. Use specific terminology rather than generic category terms. Include your target keyword naturally within the first 100 characters, as this portion receives the most weight in relevance assessments.

Incorporate a specific fact, statistic, or insight in your meta description that previews unique value. This glimpse of original information signals that your page offers more than generic rehashing of common knowledge. The preview functions as a quality indicator that encourages both clicks and citations.

Keep meta descriptions between 140 and 160 characters to maximize display across platforms while maintaining focus. Every word should contribute to communicating page relevance and value—eliminate filler phrases, brand names unless essential for context, and generic descriptors that could apply to any page.

Measuring and Monitoring LLM Citation Performance

Implementing these strategies requires tracking whether they actually improve your AI visibility. Traditional SEO metrics like rankings and organic traffic don’t capture LLM citation performance. You need new measurement approaches that assess how frequently and prominently your content appears in AI-generated answers.

Several platforms now offer LLM citation monitoring capabilities, though the space remains emerging. Tools like Profound, Semrush’s LLM tracking features, and specialized services like AnswerLens provide visibility into which content earns citations across different AI platforms. These tools track citation frequency, share of voice in your category, and sentiment in how your brand gets presented.

Manual monitoring remains valuable for understanding qualitative aspects of citations. Regularly query major AI platforms with questions your content addresses and note which sources get cited. When competitors appear consistently while your brand remains absent, this signals topics requiring optimization attention. Track not just whether you get cited, but also the accuracy of how your information gets represented and the context in which your brand appears.

Key Metrics for AI Visibility

Citation rate measures what percentage of relevant queries that result in your content being cited or mentioned. This becomes your primary visibility metric, analogous to impression share in paid search. Track this across different query categories to identify where your content performs strongest and which topics need reinforcement.

Share of voice quantifies your citation frequency relative to competitors in your space. If queries about your industry generate 100 citations total and yours represents 15, you hold a 15 percent share of voice. Growing this metric indicates improving position as a category authority in AI-generated content.

Attribution quality assesses how your citations appear in context. Are you cited as the primary source or mentioned among many alternatives? Does the AI accurately represent your information or introduce errors? Is sentiment positive, neutral, or negative? Quality matters as much as quantity for building brand authority through AI channels.

Implementation Roadmap

Implementing these ten strategies effectively requires prioritization and systematic execution. Begin with your highest-traffic, most important content rather than attempting to optimize everything simultaneously. This focused approach demonstrates results faster and builds organizational momentum.

Start with quick wins that require minimal resource investment. Adding answer capsules to existing content takes hours rather than weeks. Restructuring content for answer-first formatting requires editing but not creating new material. Implementing schema markup for key pages delivers immediate technical improvements.

Progress to medium-effort strategies like building comprehensive FAQ sections and establishing content refresh cycles. These require more planning and ongoing commitment but generate compounding returns as your content stays current and captures more long-tail queries through question-answer matching.

Reserve highest-effort strategies like producing original research and building complete topic clusters for your core competitive areas. These investments deliver the strongest citation advantages but demand significant resources to execute properly. Focus them where they create maximum strategic value for your business.

Most importantly, recognize that getting cited by LLMs represents a fundamental shift in content strategy, not a tactical checklist. The brands earning consistent citations share a common characteristic: they focus on becoming the best source of information in their domain rather than gaming algorithmic preferences. They publish content worth citing because it provides unique value, demonstrates genuine expertise, and helps people make better decisions.

The rise of large language models hasn’t overturned SEO fundamentals—it has refined and reinforced them. Content that succeeds in earning citations possesses the same qualities that always drove sustainable search performance: authoritative information, clear communication, user-focused structure, and genuine expertise. The difference now is that these qualities matter more than ever before, as AI systems prove increasingly effective at distinguishing authentic knowledge resources from keyword-optimized filler.

Your competitive advantage comes from committing to these principles completely. While others chase algorithmic shortcuts, you become the source that deserves citation. That positioning compounds over time as training data, retrieval patterns, and brand recognition all reinforce your authority. The brands visible in AI-generated answers three years from now are those making strategic investments in citation-worthy content today.